I pay for AI.

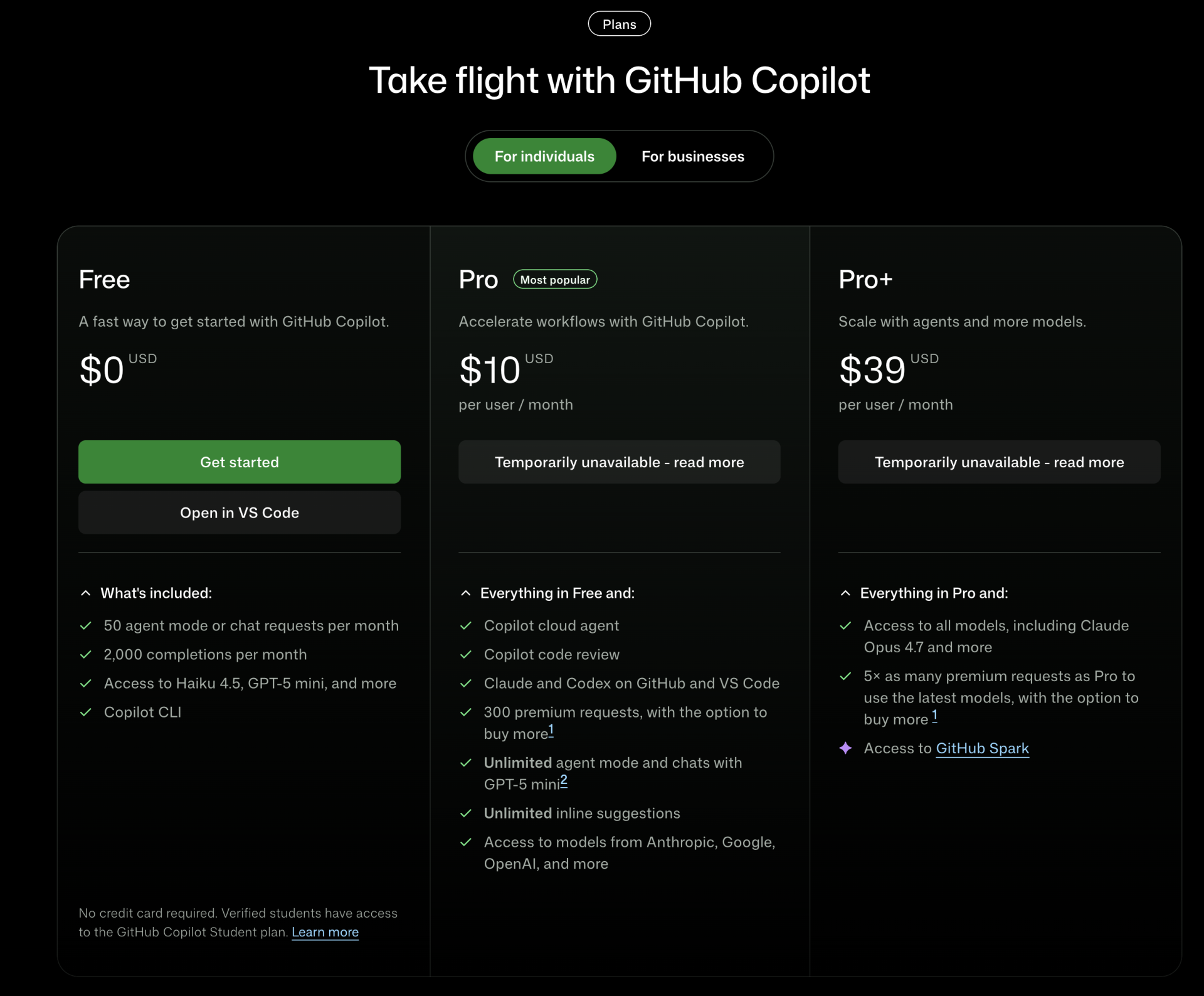

That sentence alone would come as a shock to those who know me personally. No free AI; I actually gave money to a company, my hard-earned money, to gain access to both proprietary and open source LLMs. I changed my GitHub Education plan to “Pro” after they removed access to Copilot. It felt like a defeat.

For years, I had lived the life of a digital parasite, thriving on student discounts, generous trial periods, obscure Chinese providers that I could only navigate by Chrome’s “Translate into English”, and – my favorite – the noble art of API key rotation: Finding a provider who offers a certain amount of tokens that resets on a daily basis, and then registering 10 times to receive 10 different API keys.

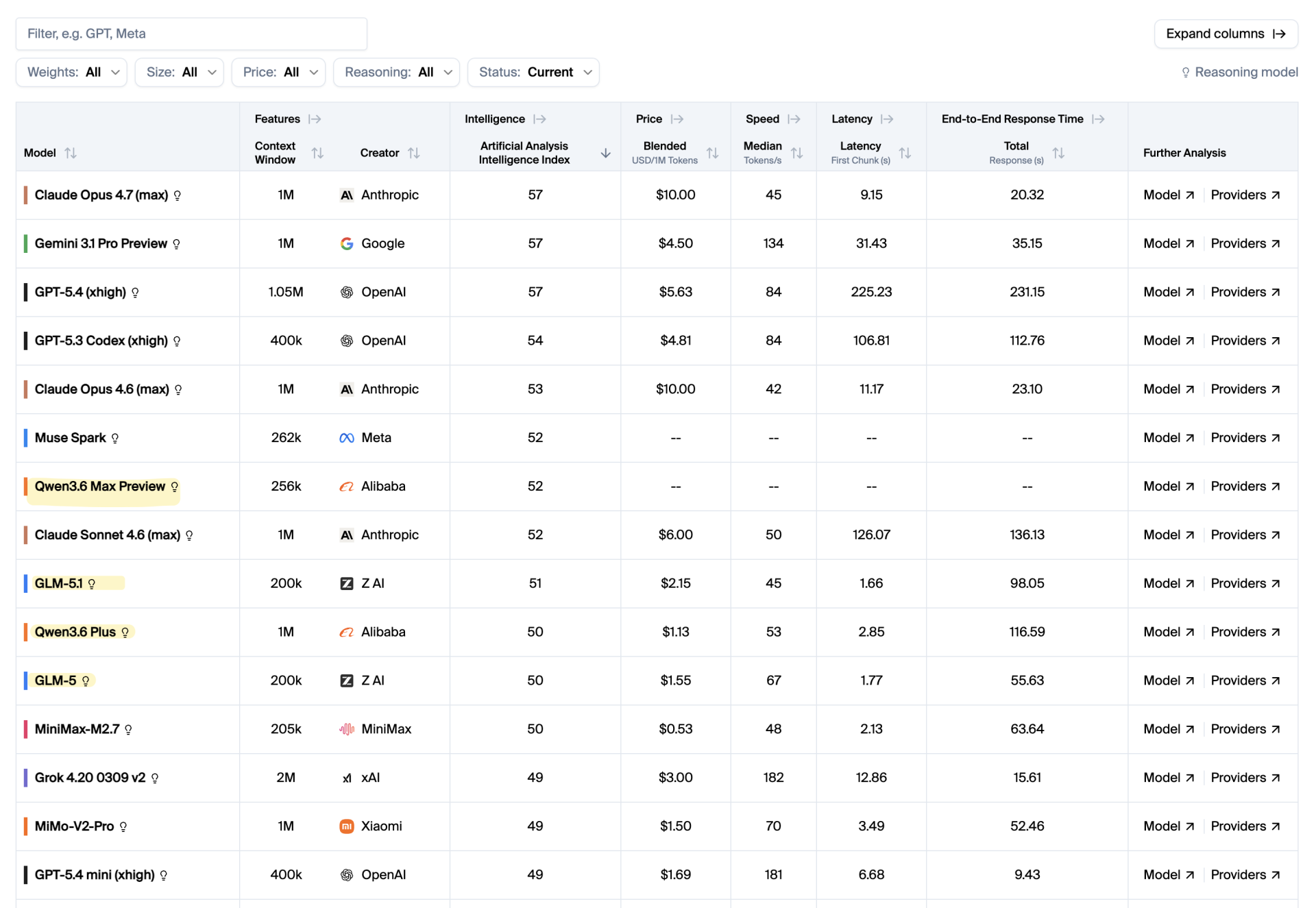

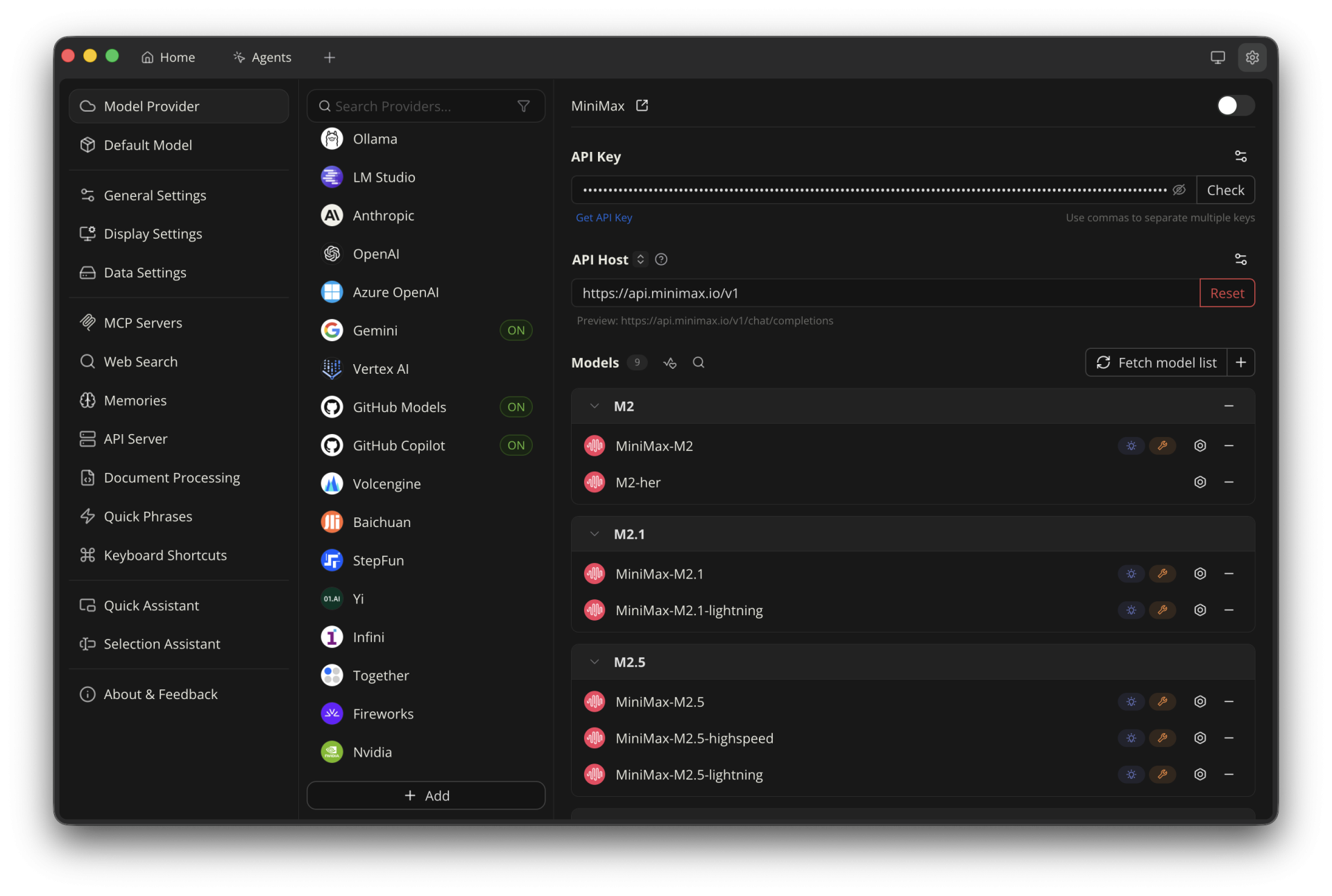

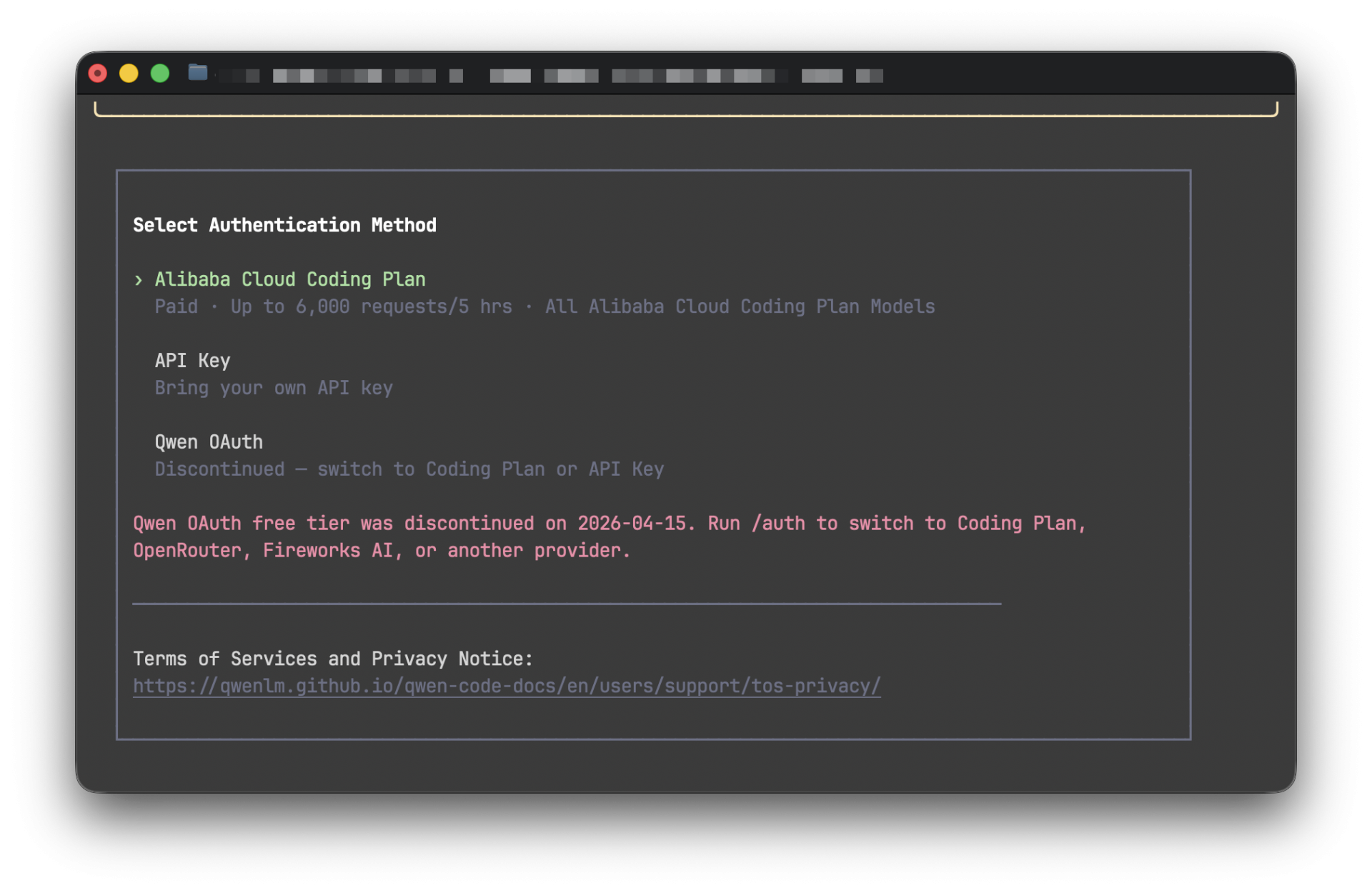

Through iFlow’s CLI, iflow, I had an almost unlimited amount of tokens for open-source models that ranked at the top of every open-source benchmark: First GLM-4.7, then Kimi K2 Thinking, MiniMax 2.5, later GLM-5, and most recently GLM-5.1. Then there was Qwen, or, as I was used to typing it into my CLI, qwen. Qwen itself doesn’t have the absolute best models, I must admit, despite a plethora of LLMs that seemed to be churned out on a daily basis. But they were fast and tailored to your needs. qwen3-coder-plus was a great tool for getting things done quickly and reliably.

All in all, I had access to probably every LLM – open source or not – that made it into the Leaderboard. And for all of them, I paid absolutely nothing. Not a single transaction happened; I simply registered more accounts with throwaway emails or even throwaway phone numbers. I managed them all in Cherry Studio, an app that bridges the gap between mere conversations and actual coding. The list of providers was so long that I only had to randomly click on one, then click the “Get API key…” link, and it led me straight to some provider whose name I immediately forgot. I signed up multiple times and kept rotating the fuck out of their API keys. You may hate me now.

That era, ladies and gentlemen, seems to be coming to an end.

The Subreddit as a Canary in the Coal Mine

Since I was and still am an avid user of GitHub Copilot – with no more student discount, despite being verified – I subscribed to its unofficial subreddit, GitHub Copilot. This subreddit was also where I found the infamous email informing all “students” that they were separating the Copilot feature from the education plan and simply giving them access to the already-free base account, which is as useful as a sixth finger.

The email, which arrived on March 12 and was “effective March 12, 2026”, caused a flood of posts on the GHCP subreddit. Little did they know there was much worse to come. It had actually been there the entire time, in the email that every “student” read thrice because of disbelief and confusion:

(…) we will be making additional adjustments to available models or usage limits on certain features (…)

Back then, this sentence was either overlooked or misinterpreted as “we’re trying to balance this out and maybe opt for generous usage limits instead of taking away your beloved Claude Opus 4.6.” Check out the subreddit today, and I promise you’ll find at least 5 posts on the first page about “rate limits”.

Their complaints are justified – mostly. I have very little pity left for vibe-coders who were running multiple instances of Copilot requests to basically do everything for them. But soon, actual students from Pakistan, like a good friend of mine who had just started learning the new technology and relied on free AI tiers, were unexpectedly rate-limited at a rapid pace.

One might be inclined to forget who’s actually behind GitHub. It is Microsoft. Yes, that Microsoft that collected lawsuits like Pokémon cards, with its most infamous one in 1998, a landmark antitrust lawsuit accusing Microsoft of abusing its monopoly power by bundling its Internet Explorer browser with the Windows operating system. The 11-hour-long deposition video is still on YouTube, exposing a young Bill Gates trying to talk his way out of what it was: a fucking monopoly.

I don’t think I need to add anything more as to why Microsoft + GitHub + Copilot is a terrible formula. The effects, however, are felt, whether you like Microsoft or not (who the fuck likes Microsoft anyway 🤔). Their latest coup, barely a few hours old at the time I’m writing this: Claude Opus has been removed from the GitHub Pro plan, the one people paid around $10 for to get, well, access to models like these, and moved to Pro+.

And if you thought rate limiting, the end of free AI trials, and every other measure against squeezing as much AI as possible out of Copilot were the legendarily dumb decisions of one ultra-capitalistic behemoth alone, you’re wrong. All of the models I’ve mentioned above are now behind a paywall or limited so much that working with them is nearly impossible. No more free AI for me. iFlow at least had the decency to inform people in advance that they’re shutting down the iflow CLI on April 17. The others? “Enter your API key here…”

A’ight, let’s talk about rate limiting for a second. I hate it, users hate it, and definitely /r/GitHubCopilot hates it, too. However, all this nerfing of once world-class models and the systematic destruction of GitHub’s Copilot plan are just a symptom of something much bigger and darker to come.

Everyone who’s ever used anything but ChatGPT.com knows that AI requires energy. Enormous amounts of energy. So much so that people have started making plans to put data centers in space or to reopen recently closed nuclear facilities. But the data centers still on planet Earth are already at maximum capacity, partly due to grandmas creating “funny” images or the occasional image of the US President depicting himself as Jesus, whose bare hand can cure the sick.

And energy is where the problem lies (not with Trump per se, even though one could make the argument that anything this geriatric man with hair like mummified foreskin touches ends up worse than it was before, so much so that his own military advisors locked him out of a room during a rescue mission of a pilot shot down over Iran because “Trump screamed at aides for hours”).

The Great Scaling Back

For the better part of two years, AI providers played the “growth at all costs” LARP with remarkable enthusiasm. They subsidized compute, incinerated mountains of venture capital like it was a renewable resource, and handed out access to frontier models as free AI like a street vendor handing out samples outside a tourist trap. The pitch was simple: get everyone hooked, worry about monetization later. It was the SaaS playbook, but turbocharged and run by people who apparently believed that “later” was someone else’s problem.

Well. Later has arrived. And it brought a spreadsheet.

The VC spigot hasn’t dried up entirely, at least not at the time I’m writing this, but the pressure to demonstrate actual revenue, meaning real money from real customers, not just “annualized run rate” accounting gymnastics, is mounting at every major AI lab. The entire idea of a free tier is being systematically dismantled. What was once a generous playground is becoming a controlled, highly restricted waiting room. You can sit, but don’t get too comfortable, and please note the subscription plans on your way out.

Poe, Compute Points, and the Theater of Generosity

Take Poe, for example. I had five working API keys in rotation to use the then recently released and cheap Gemini 3 Flash, which gave me basically unlimited usage of that model as my default chat model – free AI. What started as an impressively versatile aggregator (a single interface to Claude, GPT, Mistral, and whatever else Quora felt like plugging in that week) has gradually mutated into a convoluted “compute point” economy.

Poe is a master of obfuscation. Instead of just saying “you get X messages per day,” they invented a currency. Compute points. It sounds vaguely scientific, almost noble, like you’re managing actual computational resources rather than just watching a progress bar drain toward a paywall. Different models cost different amounts of points, essentially the same business model that OpenRouter has been riding on for years. Some responses cost more based on length. It’s gamified poverty, not free AI.

The message is clear: your access to “free AI” was never free. It was a very specific, very finite ration of digital oxygen. Once it’s gone, they’d like your credit card number, please.

Even the Chinese Giants Are Building Fences

Then there’s the contingent we thought might play a different game entirely. The Qwen ecosystem and the broader wave of Chinese AI development initially arrived with the energy of someone aggressively trying to steal market share at any cost, offering free AI left and right. Open weights, generous API tiers, competitive pricing – it had the whiff of a geopolitical strategy dressed up as altruism. And maybe it was. But running H100-equivalent clusters at scale swallows the same humongous amount of energy in China as it does in the West, and it isn’t a hobby for bored billionaires. It’s expensive infrastructure, and infrastructure demands return.

The fences are going up. The walled gardens are being reinforced with each model generation. “Open source” is rapidly becoming a term that means “you can inspect the weights, but good luck running them on anything you can actually afford.” The open-source dream is slowly being carved into “open enough to build hype, closed enough to make money.”

I’m not writing this to be cynical. It’s just a pattern I see day in, day out. We’ve seen this movie before. Remember “serverless servers”, which were obviously servers, but hosted in “the cloud” where they could massively scale up or down and, if not under constant watch, could bankrupt an entire company? They, too, started out like every platform that ever offered generous access to developers before discovering that developers don’t pay the server bills.

AI as a Utility, and What That Actually Means for You

The industry is completing its pivot from “AI as a world-changing miracle” to “AI as a boring, commoditized utility.” And utilities – be they electricity, water, broadband, or now high-end LLMs – share one charming universal trait: once you’re dependent on them, they will monetize every single drop until your infrastructure budget looks like a hostage negotiation.

Think about it. Ten years ago, AWS was the scrappy alternative. Now it’s the default, and the bills are large enough that entire engineering teams exist purely to optimize them. The same trajectory is playing out with AI, just compressed into a fraction of the time because compute costs are infinitely higher and the pressure to monetize is much more acute.

The “AI engineer” of 2025 who built their entire workflow on a free AI Claude account and a rotating cast of open API keys is about to experience the same rude awakening that the “serverless evangelist” of 2018 had when the Lambda bills arrived.

The Part Where I Admit I’m Part of the Problem

Look, I paid my $11.95 (incl. VAT). I did it with the resigned energy of a man who knows he’s lost a game he didn’t know he was playing. I’m not angry at GitHub. Microsoft needs to eat too, and the Copilot product is, well, let’s call it fine – that is, if you’re even lucky enough to get it. It does what it says on the tin. The autocomplete is good, the chat integration is useful, and “Claude Sonnet 4.6 inside my IDE” is a sentence I didn’t think I’d be writing without wincing.

But I’m acutely aware that $10 is probably just the opening bid. GitHub just stopped all new registrations for their “Student”, “Pro”, and “Pro+” plans. The tiers will multiply. The limits will tighten further for free users. The Pro plan will quietly become what the free plan used to be, and yet another tier will materialize above “Pro+” with a straight face. “Free AI” will be a phrase of the past. This is how it works. This is how it has always worked.

So, What Now?

So, where does that leave us? Two pieces of advice: If you’re still riding the free-tier wave in 2026, you’re basically walking around with a “kick me” sign on your back. Enjoy the sunshine while it lasts; it won’t last long, that much is already clear.

Get your budget in order. Free AI isn’t coming back. AI isn’t a perk; it’s standard equipment. And “optional” infrastructure has a nasty habit of becoming a non-negotiable line item overnight. That era of “almost free” AI was just a collective fever dream fueled by irrational exuberance and sheer stupidity.

The dream’s over. The bill is coming. I’ve already cleared mine. You’d better go check your own. The price probably just went up while you were reading this.